AI Is Hungry for Power. Are Your Transformers AI-Ready?

When the first generative AI platforms stunned the world with what they could do, the reaction was a mixture of awe and curiosity. But a wave of realization soon followed: this technology would demand enormous amounts of energy.

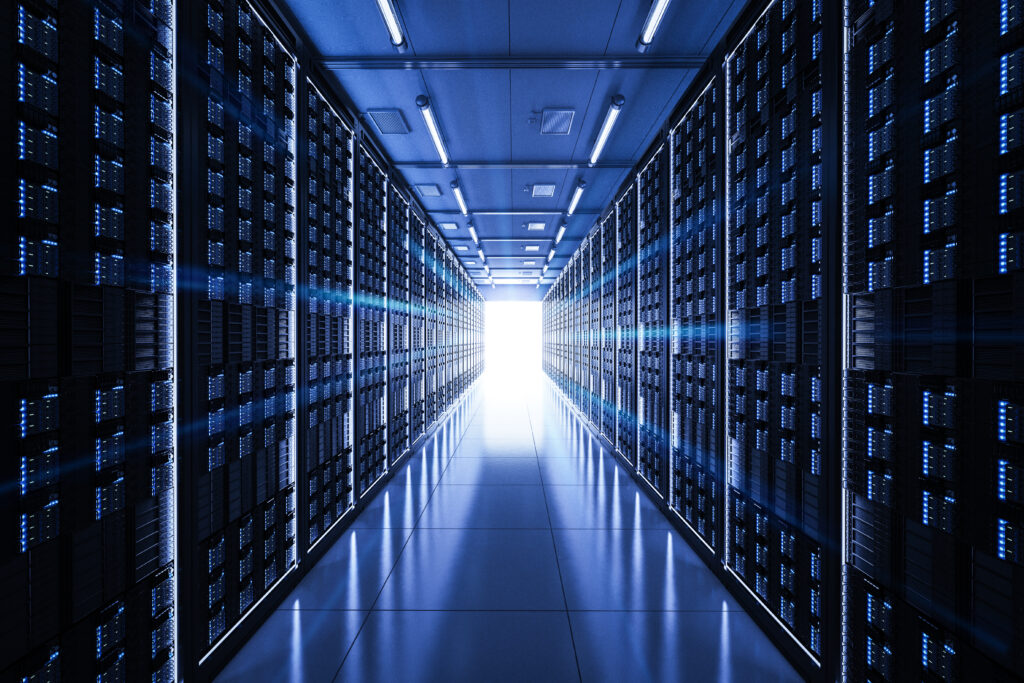

Behind every chatbot conversation, image generator, or recommendation algorithm is a vast network of servers. And behind those servers? A web of power infrastructure hard at work, much of it invisible to the end user. That includes the transformer cores that make high-speed, high-volume computation possible.

As AI accelerates, so does its appetite for power. That raises the question: are our transformers AI-ready?

How Do Transformers Fit Into AI Data Center Power Delivery?

Transformers step high-voltage electricity from the grid down to the levels that data center equipment can use. Their cores, made from carefully shaped and stacked electrical steel, largely determine how efficiently and reliably that power is delivered.

In AI data centers, the role of transformers is magnified. Demand is not only higher but also more variable. Transformers must help maintain consistent voltage, minimize energy loss, and manage thermal loads, all in facilities that can draw tens or even hundreds of megawatts of electricity.

For AI data centers, that makes the design and quality of the transformer cores behind them more important than ever.

Unique Power Demands of AI vs. Traditional Data Centers

We already know that traditional data centers are power-hungry. But AI data centers? They’re on an entirely different level.

While a conventional server might draw approximately 500 watts, AI-focused servers equipped with GPUs or TPUs can require far more, often several kilowatts each. Multiply that across a facility filled with racks of these high-performance units, and the power draw adds up fast.
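To see how quickly the numbers add up, here is a back-of-the-envelope sketch. Only the roughly 500 W conventional-server figure comes from this article; the 10,000-server count and the 6 kW AI-server draw are hypothetical values chosen for illustration, and the estimate covers IT load only (no cooling or conversion losses).

```python
# Back-of-the-envelope IT load estimate for a facility of identical servers.
# The 500 W figure comes from the article; the server count and the
# 6,000 W AI-server draw are HYPOTHETICAL values for illustration only.
# Cooling, lighting, and power-conversion losses are ignored.

def facility_it_load_mw(servers: int, watts_per_server: float) -> float:
    """Return the combined server load in megawatts."""
    return servers * watts_per_server / 1_000_000


# Same number of servers, different per-server draw.
conventional_mw = facility_it_load_mw(servers=10_000, watts_per_server=500)
ai_mw = facility_it_load_mw(servers=10_000, watts_per_server=6_000)  # assumed AI server draw

print(f"Conventional servers: {conventional_mw:.1f} MW")  # 5.0 MW
print(f"AI-focused servers:   {ai_mw:.1f} MW")            # 60.0 MW
```

Under these assumptions, the same server count moves from single-digit megawatts into the tens of megawatts, and every one of those megawatts flows through the facility's transformers.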

The nature of AI workloads also introduces new stresses. Training large models means long stretches of sustained, high power draw, and because thousands of accelerators often work in lockstep, that draw can swing sharply as they move between compute and communication phases. Loads that are both heavier and less predictable strain even robust systems, and they put pressure on transformers to hold voltage steady as demand shifts, without failure or significant energy loss.

Cooling systems are pushed harder, too. Denser racks produce more heat, which is driving the shift from air cooling to liquid or immersion cooling, and transformers must maintain stable performance despite higher temperatures and tighter physical constraints.

The reality is that what worked for yesterday’s data center isn’t enough for tomorrow’s AI infrastructure.

What It Takes to Be “AI-Ready”

AI-ready transformers need to deliver consistent performance under high, sustained loads. Key characteristics include:

- Low core loss: AI facilities run around the clock, and core (no-load) loss is drawn whenever a transformer is energized, regardless of load. Cores with low core loss reduce heat generation and energy waste, supporting lower operating costs and higher reliability.

- Thermal stability: Heat is one of the leading causes of transformer degradation, largely because it ages the insulation. AI-ready cores must help keep temperatures down, accommodate thermal expansion, and resist breakdown.

- Compact scalability: As facilities grow and floor space tightens, transformer cores need to deliver the same level of performance in smaller footprints.

- Material quality: High-grade electrical steel, precision slitting, and advanced stacking techniques directly influence transformer performance. When material and assembly are tightly controlled, performance is more consistent from unit to unit.
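Because core loss accrues every hour a transformer is energized, even a small reduction compounds over a year. The sketch below shows that arithmetic. The 10 kW and 8 kW loss ratings and the $0.10/kWh electricity price are hypothetical placeholders, not Corefficient figures; substitute values from an actual transformer test report and utility tariff.

```python
# Rough annual energy and cost of transformer core (no-load) loss.
# Core loss is present whenever the transformer is energized, regardless of
# load, so it accrues for every hour of the year.
# The loss ratings and price below are HYPOTHETICAL illustration values.

HOURS_PER_YEAR = 8_760  # 365 days x 24 hours


def annual_core_loss_kwh(core_loss_kw: float, hours: float = HOURS_PER_YEAR) -> float:
    """Energy dissipated by core loss over `hours` of continuous energization."""
    return core_loss_kw * hours


def annual_core_loss_cost(core_loss_kw: float, price_per_kwh: float) -> float:
    """Yearly cost of that energy at a flat electricity price."""
    return annual_core_loss_kwh(core_loss_kw) * price_per_kwh


baseline_kw = 10.0  # hypothetical core loss of a standard core
improved_kw = 8.0   # hypothetical core loss of a lower-loss core
price = 0.10        # hypothetical flat price in $/kWh

saved_kwh = annual_core_loss_kwh(baseline_kw) - annual_core_loss_kwh(improved_kw)
saved_cost = annual_core_loss_cost(baseline_kw, price) - annual_core_loss_cost(improved_kw, price)

print(f"Energy saved per transformer: {saved_kwh:,.0f} kWh/year")  # 17,520 kWh/year
print(f"Cost saved per transformer:   ${saved_cost:,.0f}/year")    # $1,752/year
```

The energy not lost in the core is also heat the cooling system no longer has to remove, which is why low core loss and thermal stability go hand in hand.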

How Corefficient Supports Power-Hungry Infrastructure

At Corefficient, we’ve always known that core design drives performance, and as AI workloads grow more demanding, that focus has become even more critical.

Our manufacturing process is designed to deliver transformer cores that perform under pressure. We start with high-quality electrical steel and apply expert slitting and shaping processes to reduce variability and maximize magnetic efficiency. Our stacking and assembly techniques ensure optimal alignment, minimizing core loss and improving thermal performance.

Whether you’re building large-scale power transformers or compact, high-efficiency units, Corefficient delivers cores designed for today’s power demands as well as for tomorrow’s AI-driven future. Our team works directly with OEMs and power system engineers to provide solutions tailored to your application, with the goal of making sure you’re not just keeping up with AI but staying ahead of it.